This article was originally published on cerbero-blog.com on October the 7th, 2013.

While this PoC is about static analysis, it’s very different from applying a packer to malware. OS X uses an internal mechanism to load encrypted Apple executables, and we’re going to exploit the same mechanism to defeat current anti-malware solutions.

OS X implements two encryption systems for its executables (Mach-O). The first one is implemented through the LC_ENCRYPTION_INFO load command. Here’s the code which handles this command:

    case LC_ENCRYPTION_INFO:
        if (pass != 3)
            break;
        ret = set_code_unprotect(
            (struct encryption_info_command *) lcp,
            addr, map, slide, vp);
        if (ret != LOAD_SUCCESS) {
            printf("proc %d: set_code_unprotect() error %d "
                   "for file \"%s\"\n",
                   p->p_pid, ret, vp->v_name);
            /* Don't let the app run if it's
             * encrypted but we failed to set up the
             * decrypter */
            psignal(p, SIGKILL);
        }
        break;

This code calls the set_code_unprotect function which sets up the decryption through text_crypter_create:

    /* set up decrypter first */
    kr=text_crypter_create(&crypt_info, cryptname, (void*)vpath);

The text_crypter_create function is actually a function pointer registered through the text_crypter_create_hook_set kernel API. While this system can allow for external components to register themselves and handle decryption requests, we couldn’t see it in use on current versions of OS X.
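From a scanner's point of view, the presence of this load command can be checked without any kernel involvement. The following is a minimal sketch, not part of the original research: it assumes a thin (non-Universal), little-endian Mach-O image already read into memory, and the function name `find_encryption_info` is hypothetical. The constants and field layout are those of the public `mach-o/loader.h` header.

```python
import struct

MH_MAGIC = 0xFEEDFACE       # 32-bit Mach-O header magic
MH_MAGIC_64 = 0xFEEDFACF    # 64-bit Mach-O header magic
LC_ENCRYPTION_INFO = 0x21   # load command handled by set_code_unprotect


def find_encryption_info(data):
    """Return a list of (cryptoff, cryptsize, cryptid) tuples.

    Assumption: `data` holds a thin, little-endian Mach-O image.
    Universal (fat) binaries and big-endian images are rejected.
    """
    if len(data) < 28:
        raise ValueError("too short for a Mach-O header")
    magic, = struct.unpack_from("<I", data, 0)
    if magic == MH_MAGIC:
        header_size = 28
    elif magic == MH_MAGIC_64:
        header_size = 32
    else:
        raise ValueError("not a thin little-endian Mach-O")

    # ncmds and sizeofcmds sit at offsets 16 and 20 in both header variants.
    ncmds, sizeofcmds = struct.unpack_from("<II", data, 16)
    offset = header_size
    end = min(len(data), header_size + sizeofcmds)

    found = []
    for _ in range(ncmds):
        if offset + 8 > end:
            break
        cmd, cmdsize = struct.unpack_from("<II", data, offset)
        if cmdsize < 8:
            break  # malformed command, stop walking
        if cmd == LC_ENCRYPTION_INFO and cmdsize >= 20 and offset + 20 <= end:
            # cryptoff, cryptsize, cryptid follow cmd and cmdsize
            found.append(struct.unpack_from("<III", data, offset + 8))
        offset += cmdsize
    return found
```

An empty result only means that this first mechanism is not in use; it says nothing about the segment flag described next.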

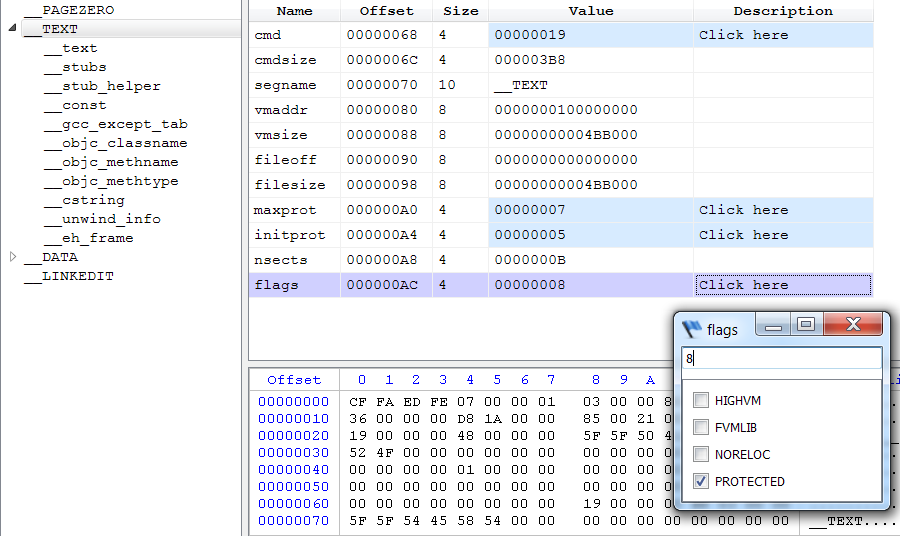

The second encryption mechanism, which is the one actually used internally by Apple, doesn’t require a load command. Instead, it signals encrypted segments through a flag.

The SG_PROTECTED_VERSION_1 flag is checked while loading a segment in the load_segment function:

    if (scp->flags & SG_PROTECTED_VERSION_1) {
        ret = unprotect_segment(scp->fileoff,
                                scp->filesize,
                                vp,
                                pager_offset,
                                map,
                                map_addr,
                                map_size);
    } else {
        ret = LOAD_SUCCESS;
    }

The unprotect_segment function sets up the range to be decrypted, the decryption function and method. It then calls vm_map_apple_protected.

    #define APPLE_UNPROTECTED_HEADER_SIZE (3 * PAGE_SIZE_64)

    static load_return_t
    unprotect_segment(
        uint64_t file_off,
        uint64_t file_size,
        struct vnode *vp,
        off_t macho_offset,
        vm_map_t map,
        vm_map_offset_t map_addr,
        vm_map_size_t map_size)
    {
        kern_return_t kr;

        /*
         * The first APPLE_UNPROTECTED_HEADER_SIZE bytes (from offset 0 of
         * this part of a Universal binary) are not protected...
         * The rest needs to be "transformed".
         */
        if (file_off <= APPLE_UNPROTECTED_HEADER_SIZE &&
            file_off + file_size <= APPLE_UNPROTECTED_HEADER_SIZE) {
            /* it's all unprotected, nothing to do... */
            kr = KERN_SUCCESS;
        } else {
            if (file_off <= APPLE_UNPROTECTED_HEADER_SIZE) {
                /*
                 * We start mapping in the unprotected area.
                 * Skip the unprotected part...
                 */
                vm_map_offset_t delta;

                delta = APPLE_UNPROTECTED_HEADER_SIZE;
                delta -= file_off;
                map_addr += delta;
                map_size -= delta;
            }
            /* ... transform the rest of the mapping. */
            struct pager_crypt_info crypt_info;
            crypt_info.page_decrypt = dsmos_page_transform;
            crypt_info.crypt_ops = NULL;
            crypt_info.crypt_end = NULL;
    #pragma unused(vp, macho_offset)
            crypt_info.crypt_ops = (void *)0x2e69cf40;
            kr = vm_map_apple_protected(map,
                                        map_addr,
                                        map_addr + map_size,
                                        &crypt_info);
        }

        if (kr != KERN_SUCCESS) {
            return LOAD_FAILURE;
        }
        return LOAD_SUCCESS;
    }

Two things are worth noting about the code above. First, the first three pages (0x3000 bytes) of a Mach-O can't be encrypted/decrypted. Second, the decryption function is dsmos_page_transform.
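The range computation in unprotect_segment can be restated in a few lines. This is only a sketch under stated assumptions: it works on file offsets rather than on the kernel's map addresses, it assumes a 0x1000-byte page (matching the 0x3000 figure above), and the function name `protected_file_range` is hypothetical.

```python
PAGE_SIZE = 0x1000  # assumption: 4 KiB pages, giving the 0x3000 figure above
APPLE_UNPROTECTED_HEADER_SIZE = 3 * PAGE_SIZE


def protected_file_range(file_off, file_size):
    """Return the (start, end) file offsets that need transforming,
    or None when the segment lies entirely in the unprotected header."""
    seg_end = file_off + file_size
    if (file_off <= APPLE_UNPROTECTED_HEADER_SIZE
            and seg_end <= APPLE_UNPROTECTED_HEADER_SIZE):
        return None  # it's all unprotected, nothing to do
    # Skip whatever part of the segment overlaps the unprotected header.
    start = max(file_off, APPLE_UNPROTECTED_HEADER_SIZE)
    return (start, seg_end)
```

For a typical first segment that starts at file offset 0 and spans 0x5000 bytes, only the last two pages (0x3000 to 0x5000) are transformed; a segment that starts beyond the header is transformed in full.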

Just like text_crypter_create, dsmos_page_transform is a function pointer, which is set through the dsmos_page_transform_hook kernel API. This API is called by the kernel extension "Dont Steal Mac OS X.kext", which allows the decryption logic to live outside the kernel in a private kernel extension by Apple.

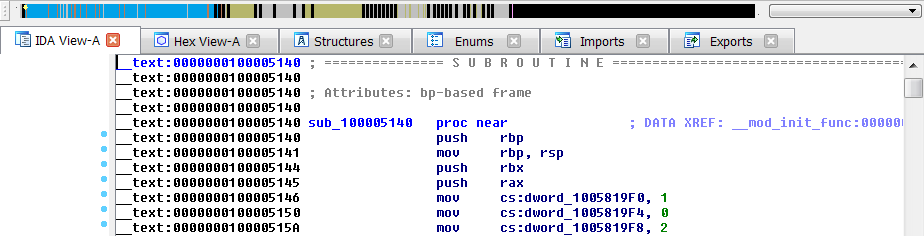

Apple uses this technology to encrypt some of its own core components like "Finder.app" or "Dock.app". On current OS X systems this mechanism doesn't provide much protection against reverse engineering, in the sense that attaching a debugger and dumping the memory is sufficient to retrieve the decrypted executable.

However, this mechanism can be abused to encrypt malware, which will then no longer be detected by the static analysis technologies of current security solutions.

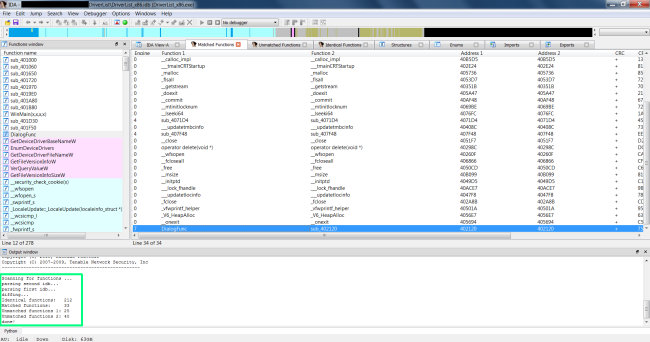

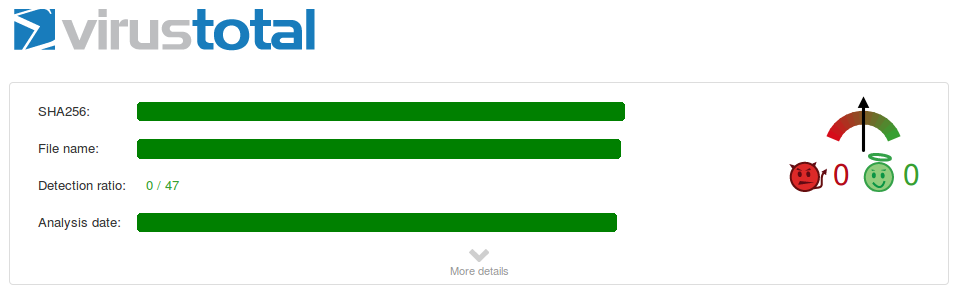

To demonstrate this claim we took a known OS X malware:

Since this is our public disclosure, we will say that the detection rate stood at about 20-25.

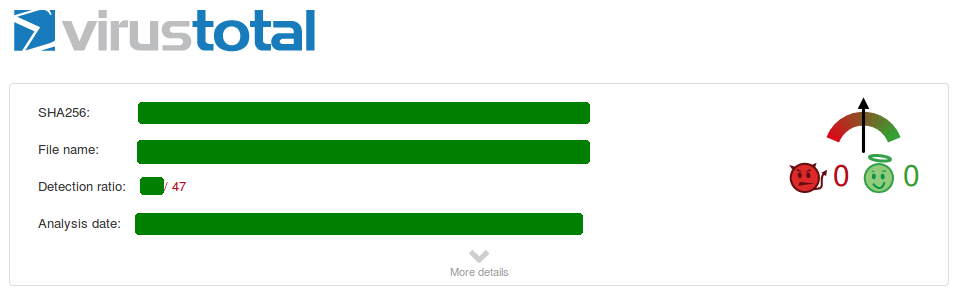

And encrypted it:

After encryption has been applied, the malware is no longer detected by scanners at VirusTotal, yet OS X has no trouble loading and executing the encrypted malware.

The difference compared to a packer is that the decryption code is not present in the executable itself, so the static analysis engine can't recognize a stub or base itself on other data present in the executable, since all segments can be encrypted. For the same reason, the scan engine can't execute the encrypted code in its own virtual machine for a more dynamic analysis.

Two other things about the encryption system are important. First, the private key is always the same and is shared across different versions of OS X. Second, the encryption is not chained but applied per page, which means that changing data in the first encrypted page doesn't affect the second encrypted page, and so on.
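The per-page property can be illustrated with a toy model. The sketch below does not implement Apple's algorithm: a SHA-256-derived XOR keystream stands in for the real cipher purely to keep the example dependency-free, and the names `transform_pages` and `_keystream` are hypothetical. What it shows is the structure described above: every page is transformed on its own with the same fixed key, and no state is carried from one page to the next.

```python
import hashlib

PAGE_SIZE = 0x1000  # assumption: 4 KiB pages


def _keystream(key, length):
    """Stand-in keystream (NOT the real cipher): SHA-256 in counter mode."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        out += hashlib.sha256(key + counter.to_bytes(8, "little")).digest()
        counter += 1
    return bytes(out[:length])


def transform_pages(data, key, page_size=PAGE_SIZE):
    """Transform each page independently; nothing is chained across pages."""
    out = bytearray()
    for start in range(0, len(data), page_size):
        page = data[start:start + page_size]
        stream = _keystream(key, len(page))  # restarted for every page
        out += bytes(a ^ b for a, b in zip(page, stream))
    return bytes(out)
```

With this structure, flipping a byte in the first page changes only the first page of the output, so individual pages can be patched or decrypted in isolation.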

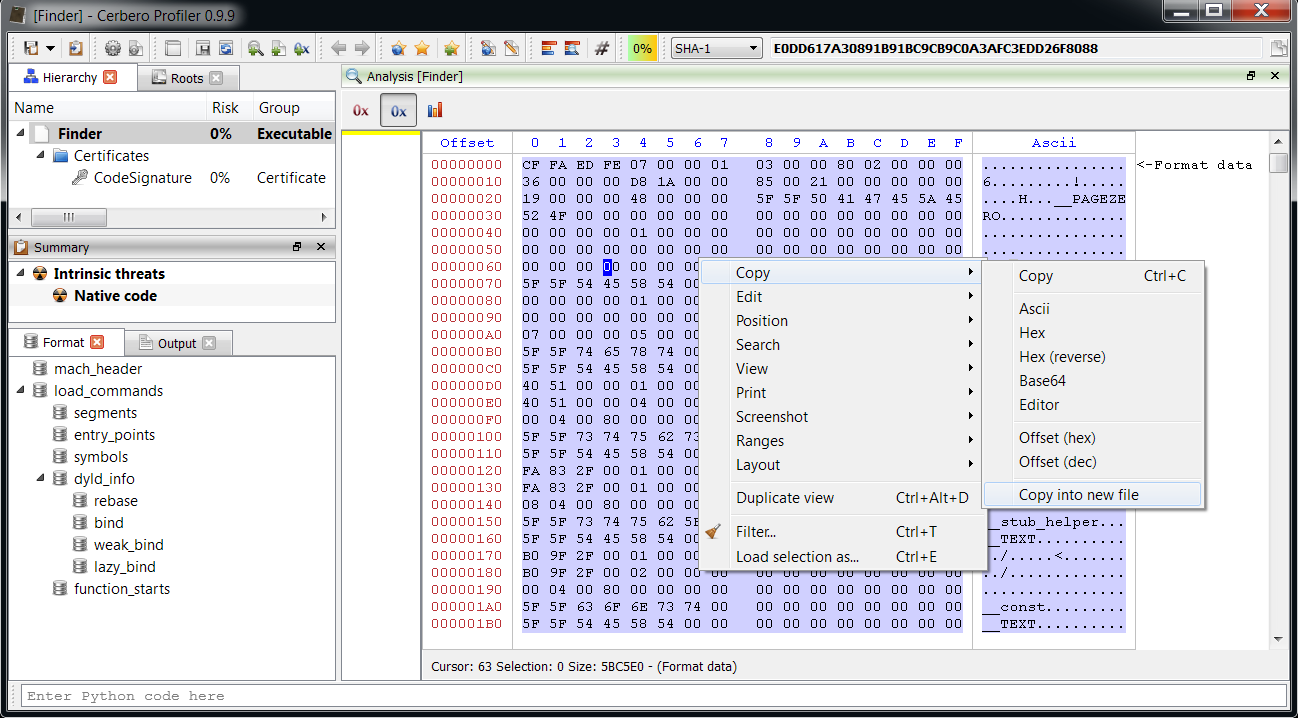

Our flagship product, Cerbero Profiler, which is an interactive file analysis infrastructure, is able to decrypt protected executables. To dump an unprotected copy of the Mach-O, just perform a “Select all” (Ctrl+A) in the main hex view and then click on “Copy into new file”, as in the screenshot.

The saved file can be executed on OS X or inspected with other tools.

Of course, the decryption can be achieved programmatically through our Python SDK as well. Just load the Mach-O file, initialize it (ProcessLoadCommands) and save to disk the stream returned by GetStream.

A solution to mitigate this problem could be one of the following:

- Implement the decryption mechanism like we did.

- Check for the presence of encrypted segments. If they are present, trust only executables with a valid code signature issued by Apple.

- Check for the presence of encrypted segments. If they are present, trust only executables whose cryptographic hash matches a trusted one.
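The "check for the presence of encrypted segments" step shared by the last two options can be sketched as follows. This is an illustration rather than the implementation used by any product: it assumes a thin, little-endian Mach-O image in memory, the function name `protected_segments` is hypothetical, and the signature or hash verification that would follow is left out. The constants and struct offsets come from the public `mach-o/loader.h` header.

```python
import struct

MH_MAGIC = 0xFEEDFACE
MH_MAGIC_64 = 0xFEEDFACF
LC_SEGMENT = 0x1
LC_SEGMENT_64 = 0x19
SG_PROTECTED_VERSION_1 = 0x8  # segment flag checked in load_segment


def protected_segments(data):
    """Return the names of segments flagged SG_PROTECTED_VERSION_1.

    Assumption: `data` is a thin, little-endian Mach-O image.
    """
    if len(data) < 28:
        raise ValueError("too short for a Mach-O header")
    magic, = struct.unpack_from("<I", data, 0)
    if magic == MH_MAGIC:
        header_size = 28
    elif magic == MH_MAGIC_64:
        header_size = 32
    else:
        raise ValueError("not a thin little-endian Mach-O")

    ncmds, sizeofcmds = struct.unpack_from("<II", data, 16)
    offset = header_size
    end = min(len(data), header_size + sizeofcmds)

    names = []
    for _ in range(ncmds):
        if offset + 8 > end:
            break
        cmd, cmdsize = struct.unpack_from("<II", data, offset)
        if cmdsize < 8:
            break  # malformed command, stop walking
        flags_at = None
        if cmd == LC_SEGMENT and cmdsize >= 56:
            flags_at = offset + 52   # flags field of segment_command
        elif cmd == LC_SEGMENT_64 and cmdsize >= 72:
            flags_at = offset + 68   # flags field of segment_command_64
        if flags_at is not None and flags_at + 4 <= end:
            flags, = struct.unpack_from("<I", data, flags_at)
            if flags & SG_PROTECTED_VERSION_1:
                raw = data[offset + 8:offset + 24]  # segname[16]
                names.append(raw.split(b"\x00", 1)[0].decode("ascii", "replace"))
        offset += cmdsize
    return names
```

A non-empty result would be the trigger for the stricter trust policy: the file is then accepted only if its signature or hash checks out.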

This kind of internal protection system should be avoided in an operating system, because it can be abused.

After we shared our internal report, the VirusBarrier Team at Intego sent us the following earlier research on Apple Binary Protection:

http://osxbook.com/book/bonus/chapter7/binaryprotection/

http://osxbook.com/book/bonus/chapter7/tpmdrmmyth/

https://github.com/AlanQuatermain/appencryptor

The research talks about the old implementation of the binary protection. The current page transform hook looks like this:

    if (v9 == 0x2E69CF40) // this is the constant used in the current kernel
    {
        // current decryption algo
    }
    else
    {
        if (v9 != 0xC2286295)
        {
            // ...
            if (!some_bool)
            {
                printf("DSMOS++: WARNING -- Old Kernel\n");
                ++some_bool;
            }
        }
        // old decryption algo
    }

VirusBarrier Team also reported the following code by Steve Nygard in his class-dump utility:

https://bitbucket.org/nygard/class-dump/commits/5908ac605b5dfe9bfe2a50edbc0fbd7ab16fd09c

This is the correct decryption code. In fact, the kernel extension by Apple, just as in the code above provided by Steve Nygard, uses the OpenSSL implementation of Blowfish.

We didn't know about Nygard's code, so we did our own research about the topic and applied it to malware. We would like to thank the VirusBarrier Team at Intego for its cooperation and for quickly addressing the issue. At the time of writing we're not aware of any security solution for OS X, apart from VirusBarrier, which isn't tricked by this technique. We even tested some of the most important security solutions individually on a local machine.

The current 0.9.9 version of Cerbero Profiler already implements the decryption of Mach-Os, even though it's not explicitly written in the changelist.

We didn't implement the old decryption method, because it didn't make much sense in our case and we're not aware of a clean way to automatically establish whether the file is old and therefore uses said encryption.

These two claims need a clarification. If we take a look at Nygard's code, we can see a check to establish the encryption method used:

    #define CDSegmentProtectedMagic_None 0
    #define CDSegmentProtectedMagic_AES 0xc2286295
    #define CDSegmentProtectedMagic_Blowfish 0x2e69cf40

    if (magic == CDSegmentProtectedMagic_None) {
        // ...
    } else if (magic == CDSegmentProtectedMagic_Blowfish) {
        // 10.6 decryption
        // ...
    } else if (magic == CDSegmentProtectedMagic_AES) {
        // ...
    }

It checks the first dword in the encrypted segment (after the initial three non-encrypted pages) to decide which decryption algorithm should be used. This logic has a problem: it assumes that the first encrypted block is full of 0s, so that when encrypted with AES it produces a certain magic and when encrypted with Blowfish another one. The logic fails when the first block contains values other than 0. In fact, some samples we encrypted didn't produce a magic for this exact reason.

Also, current versions of OS X don't rely on a magic check and don't support AES encryption. As we can see from the code displayed at the beginning of the article, the kernel doesn't read the magic dword and just sets the Blowfish magic value as a constant:

    crypt_info.crypt_ops = (void *)0x2e69cf40;

So while checking the magic is useful for normal cases, security solutions can't rely on it or else they can be easily tricked into using the wrong decryption algorithm.
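One way to act on this is to treat the magic purely as a hint. The sketch below is a possible policy, not the one used by any particular product: it assumes the buffer holds a protected segment starting at file offset 0, that the dword is read as little-endian, and the names `classify_magic` and `candidate_ciphers` are hypothetical. Because the current kernel always applies the Blowfish transform regardless of content, Blowfish is always tried first, and AES is added only when the magic explicitly suggests it.

```python
import struct

APPLE_UNPROTECTED_HEADER_SIZE = 0x3000  # three unprotected pages
MAGIC_NONE = 0
MAGIC_AES = 0xC2286295
MAGIC_BLOWFISH = 0x2E69CF40


def classify_magic(segment):
    """Classify the first dword after the unprotected pages.

    Assumptions: `segment` starts at file offset 0 and the dword is
    little-endian. "unknown" covers first blocks that were not all zeros
    before encryption, which is exactly the case where the check fails.
    """
    if len(segment) < APPLE_UNPROTECTED_HEADER_SIZE + 4:
        return "too-short"
    magic, = struct.unpack_from("<I", segment, APPLE_UNPROTECTED_HEADER_SIZE)
    return {
        MAGIC_NONE: "none",
        MAGIC_AES: "aes",
        MAGIC_BLOWFISH: "blowfish",
    }.get(magic, "unknown")


def candidate_ciphers(segment):
    """Return the algorithms to try, most likely first.

    Blowfish is always included because the current kernel never reads
    the magic; AES is only a fallback for files from old systems.
    """
    candidates = ["blowfish"]
    if classify_magic(segment) == "aes":
        candidates.append("aes")
    return candidates
```

A scanner following this policy would still decrypt a sample whose first protected block yields no recognizable magic, instead of skipping it or picking the wrong algorithm.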